Supporting IFRS17 portfolio cash flow modeling and simulation

Corios has been busy lately supporting our client’s actuaries who are implementing the IFRS17 standard on their set of insurance portfolios. The purpose for this engagement is to better estimate the Value at Risk (VaR) on their portfolios’ liability for remaining coverage (LRC) and liability for incurred claims (LIC). LIC in turn includes both the liability for claims reported but not fully reserved, and for those claims incurred but not yet reported. The approach we and our client are following uses a cash flow projection analysis that is conceptually similar to the way our banking clients model future cash flows for secured and unsecured lending portfolios.

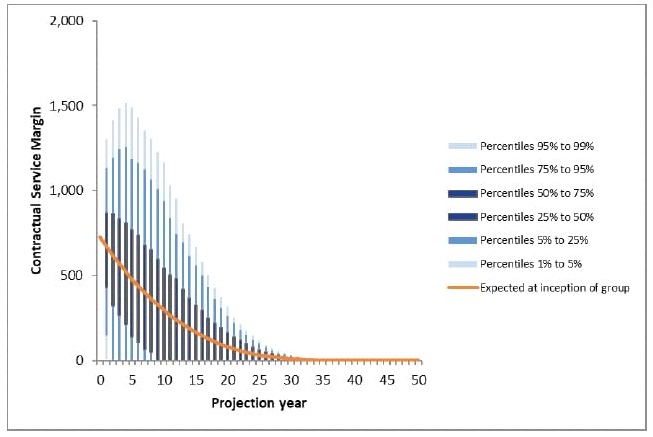

A chart that depicts the business problem we’re solving appears below (source: Moody’s Analytics). The insurance portfolio’s Contractual Service Margin (CSM) is a function of several cash flows, predominantly including premiums (income), claims payments, claims recoveries, claims expenses, and non-allocated expenses, which are aggregated into CSM (i.e., the Y axis). Most of those cash flows take place over time (i.e., this is the X axis), and are subject to the likelihood of a claim event incidence and its severity (e.g., these are some of the primary stochastic terms, which form the range of possible outcomes, from which the percentiles are calculated).

In this engagement, our challenge is to transform our clients’ actuarial science approach, originally designed using spreadsheet and Python-level analysis, and scale it to a) a more granular level: expanding from portfolio and tranche-level analytics down to handling individual insurance contracts, and b) high-intensity Monte Carlo approaches for simulating a wide range of potential risks (aka stochastic simulation).

Although IFRS17 does not apply to insurance carriers based in the US and Japan, they are still of interest to carriers who operate portfolios in other countries. Furthermore, this sort of simulation approach isn’t restricted to insurance portfolios subject to IFRS17. These approaches also apply well to bank risk management teams who model probability of default (PD) and loss given default (LGD) under the CCAR and CECL methodologies. The CECL requirement for estimating losses over the life of the loan (i.e., not only losses incurred by the reporting date, but also those losses not yet incurred) is similar in concept to elements of the IFRS17 approach for LRC and LIC.

The compute challenge

One way of looking at this is as a compute-intensive challenge. The actuarial approach uses a bottom-up approach, working from the individual contracts, estimating their future cash flows over time, and evaluating the range of future risks for claim incidence and severity for each contract over a range of simulation trials. Instead of estimating average and VaR exposures at the portfolio level, or for tranches of the portfolio, we need to compute this for every individual contract, from among many thousands of contracts in each direct and reinsurance portfolio. We also have to estimate VaR using tens or hundreds of thousands of simulation trials for each contract, and repeat this for every reporting period, for potentially tens to hundreds of simulation model packages. That represents potentially trillions of data points for each model package.

This article focuses on the compute architecture strategies that made the solution to these compute-intensive challenges feasible, more than on the IFRS17 policy implementation or the actuarial science strategy selected by our client. Corios has already built substantial experience with cash flow modeling (using both traditional and SAS/IML coding approaches), with high performance computing (SAS HPA on Teradata and Greenplum, in-database, in-memory), with open-source (NumPy, Python and Pandas) and open-source Hadoop-based distributed computing (Spark and Dask: see related blog post), and with the comparison between SAS 9.4 and SAS Viya. In this project, we had the opportunity to put all these techniques to work jointly.

Compute architecture strategies

-

SAS vs. the client’s incumbent approach (spreadsheets and Python)

-

Conventional SAS coding vs SAS/IML

-

SAS 9.4 vs. SAS Viya

-

SAS Viya vs. Spark

SAS vs. our client’s incumbent approach (Python/spreadsheets)

Traditional SAS coding approaches vs. SAS/IML

Modeling a series of cash flows takes place by portfolio/tranche/contract, by reporting date (in this case, quarterly), and by historical and projected cycle date (usually on a monthly interval, somewhere between 12 months to 60 months into the future). Given this, one might think you would set this up as time series data (i.e., one record per contract-month) with the portfolio/tranche/contract attributes set up as cross-sectional attributes. However, this is a terrible design, because of several factors, the largest of which is that network speeds and disk read/write speeds are MUCH slower than in-memory. As a result, the best approach is to keep all metrics for each contract in memory if you can, and the solution to that is either data step arrays or IML matrices.

Looking at the tradeoffs between traditional SAS coding and IML, both approaches work. It comes down to your preference of coding styles, whether you have experience writing in IML-style syntax, and whether your workload requires you to use functions that only exist in IML.

If you know SAS data step coding and you don’t know IML, you can achieve everything we did in this project with data step coding. There were no technical requirements in this project that would force us to use IML functions or capabilities, and in my experience, IML familiarity is limited to fewer than 5% of the SAS programmer universe.

SAS/IML is very handy if you love NumPy, since many of its coding conventions are very similar. You will probably find IML’s programming design to be fairly Pythonic, as I have found. In NumPy, most objects are arrays (specifically, ndarrays), and Python provides support for additional data objects such as tables, lists (or dicts or tuples) and variables. In comparison, IML directly provides object types for matrices (same as ndarrays) and lists; and Viya provides support for CAS vectorized calculations and in-memory CAS tables. IML is reliable and well designed, and Rick Wicklin at SAS, a senior developer for IML, is also an amazingly prolific and gifted writer on all topics, especially on applications of IML for business and scientific use cases.

SAS 9.4 vs SAS Viya 3.5

Now that we’ve addressed the options for which SAS language variant we selected to develop our code, we ran some comparisons between a conventional SAS 9.4 workspace server approach, and a SAS Viya 3.5 approach leveraging CAS. There is also the approach of using the SAS Programming Run-time Engine (SPRE) on Viya, but it is so functionally similar to a SAS 9.4 workspace server that it’s essentially the same approach.

Our results show that modeling stochastic cash flows works in either platform, but it is easier to distribute the work using Viya, and easier to add additional workers to the cluster. Most of the cash flow modeling work I’ve done in the past has all been completed on SAS 9.4, and it’s a reasonably high performance option as well.

Advantages of using CAS include two major advantages with respect to computational distribution and scale.

-

First, it’s very cool that the SAS data step, implemented in CAS, allows you to transparently distribute your compute processing across CAS workers with a minimum of effort, as long as your workload follows some basic rules–and some of those rules are really important. I’m not going to address all those rules here, but there are some big changes with respect to SAS macros and data table record row order.

-

Second, IML in CAS introduced the map-reduce and parallel-tasks capabilities; we used map-reduce with our IML code and it appeared to “just work”, which was glorious, since achieving the same thing in Spark or Dask on Hadoop clusters can be fiddly at best.

-

CASL: There is a new code variant under CAS for workload orchestration and task launching, called CASL. You use CASL in addition to / instead of SAS macros; as mentioned above, you need to be very careful about how you use SAS macros with CAS actions; you will be able to borrow a lot of what you know from traditional SAS when you are developing code in Viya.

-

Data row order: You also need to apply what you may already know from high performance computing (HPC) about physical partitioning of data records, and the absence of a persistent record order in a table. The SAS data set concept of the implicit row counter (i.e., “_n_”) simply doesn’t exist any more, and you need to create an explicit row ID, such as what you may often create in a database context or when you need to create a UUID.

-

Code development user experience: We found SAS Studio to be a fairly effective IDE, and its integration with Git is pretty useful and reliable. In our case, Azure was the dev-ops platform we were provided. We found that the way Azure exposes git repos and branches to third-party applications, such as SAS Viya, made the use of Azure-based git implementation for Viya a little kludgy. Also, none of the built-in Azure DevOps IDEs support SAS code natively, so tying our progress in SAS Studio to our project tracking in Azure DevOps for user story and bug resolution was a manual process.

SAS Viya distributed processing vs. Spark

For this engagement, we used a SAS Viya CAS cluster consisting of a controller and 5 workers, each with 8 cores, and this worked pretty well. Our primary challenge was reminding the wide range of developers on this environment to write their output files to the database or to the Azure blob storage, rather than to the local storage on the SAS cluster.

We didn’t explicitly use a Spark or Hadoop architecture in this project, but we’ve used Spark extensively in other projects, and we maintain a Spark stack for PySpark and Dask using Amazon EMR in our own shop. Most Spark examples we found in the domain literature for Monte Carlo analysis go deep on the architecture and light on the business domain. However, of the useful articles we did find (here, here and here), we found quite a few parallel observations from other practitioners who’ve used Spark to solve the same computational problems. Like us, they found that:

- There was a cap on the number of iterations you can complete with a fixed number of workers and threads (unless you follow our approach for chunking described below).

- They also found that quantile calculation (to calculate the final step in the VaR simulation) was a major consumer of compute resources.

- Because these computational problems typically involve fully-populated data tables with predictable number of levels per business dimension, it makes more sense to design your data model using array or matrix objects (i.e., NumPy arrays, and IML matrices), than it does to use open-ended or sparse-optimizable structures like Spark RDDs or SAS hash objects.

Our IFRS17 project involves many calculations across many objects on many dimensions, as you would expect to see in a portfolio cash flow analysis. As a result, the timings for many “Hello World” Spark for Monte Carlo projects, where there is a single stochastic term in the model and very few business entities (aka, one-dimensional), probably don’t compare well with the complexity of this project. In our case, where we had 10-12 stochastic terms, hundreds of assumptions terms, and hundreds of thousands of business entities, we had to optimize our compute architecture for a relatively intensive computational, in-memory and persistent storage requirement.

Compute design patterns

-

Data gathering

-

Random number generation

-

Calculating metrics

-

Calculating quantiles

-

In-memory, distribution and multithreading

-

Chunking records and iterations

-

Writing persistent results

Data gathering

When our workload starts, we gather insurance contract attributes and scenario assumptions for each element of the cash flow model. We will create the simulation trials dimension and the future time periods of the simulation at run-time, so the data are relatively compact when we get started. The best way to get started is to integrate all our source tables first, using partitioning and hashes. Whatever you do, avoid performing joins from inside a Monte Carlo iteration. Once we start iterating, we want as much of our data to be in-memory, not on disk or reaching across the network. Assuming that most data gathering and integration will be done using a database, then we prefer a SQL approach, augmented with database table indices if required.

SAS Viya provides a facility called FedSQL which moves many SQL operations into memory, which offers an optimization compared to traditional SAS 9.4 Proc SQL or in-database approaches.

Assuming that we’re going to move the work across CAS workers in our cluster, it may be useful to use a physical partitioning on the data files, so that we can optimize movement of the data across the workers and cut down on latency related to data movement.

Random number generation

Random number generation in SAS is a pseudo-random process, because the generation is rarely “truly” randomized. Most pseudo-random number generators start with a seed value and then rely on extremely long lists of random numbers from one of many potential distributions. This process was dramatically improved in SAS Viya, where the presence of more high quality pseudo-random number generation techniques, using approaches like the Mersenne Twister, are more broadly accepted by actuaries and scientists who rely on conditions are close to purely random as can be generated.

Metrics calculations

If you are using IML, then rely on vectorized calculations as often as possible. IML may otherwise rely on cell-by-cell calculations when building a calculated column from source columns from your matrices, and this should be avoided.

If you are using traditional SAS data step coding, then keep all the data for a single contract in the Program Data Vector (PDV). One way to do this is by using arrays, where you can stipulate that the array will have one element for each time period in historical and projected time by cycle date; or it should contain the entire actuarial triangle by using two more array dimensions.

Traditional SAS data step coding also provides other in-memory objects such as hashes and formats which can be helpful for lookups, which may even help you avoid running a new calculation.

Computational performance measured in wall clock time will be linear relative to a unit increase in the number of contracts and iterations, and sub-linear relative to an increase in CAS cluster workers and threads. It takes time to move data to and from workers, and there is almost always a measurable (but not large) overhead tied to using more workers in a cluster.

Even if you add more workers and more memory to each worker, there is a finite capacity of the size of the problem you can solve, which is typically tied to the overall memory within and across the workers. Viya CAS is an in-memory compute engine; it picks up a lot of speed compared to SAS 9.4, by loading data and compute cycles into memory. However, at some point you will need to write your results back to disk for persistence or for caching, and you’ll be back in the to disk and network speeds again.

I mentioned above you should keep all attributes and metrics for a single contract in the PDV–meaning, keep all SAS variables for one contract inside one table record. Another way of saying this is, avoid writing attributes for a single contract across CAS table records. With cash flow calculations, some operations refer back to previous time periods, or in some cases, due to actuarial triangles, refer to other cells in a table or across triangles. The time required to look back at a previous record (especially if this is done recursively) will be a large penalty on performance. Furthermore, with CAS (as with many HPC platforms), lags and leads cannot be (easily if at all) calculated, because records from a single source table may be distributed across workers and threads. Even something as conventional as the SAS implicit record counter doesn’t exist in CAS.

Quantile calculations

While the code needed to calculate a quantile (e.g., a percentile, a decile, etc.) looks really simple, the actual calculations involved are more complex than at first glance. This is an even greater challenge when working with exceptionally large data volumes. Behind the scenes, what the quantile operator is performing on your behalf includes a sort of all records, a count of records, breaking of ties, then selecting a record at the threshold and looking up its value. It is best to perform the quantile calculation as late as possible in the process, and where possible, either use aggregation or sampling to reduce the number of records involved, especially if your metrics are well behaved (i.e., not sparse on the tails where VaR is to be calculated, relatively few ties, etc.).

In-memory

There are unquestionable benefits to computing these stochastic simulations in-memory as opposed to reading data from a remote database, sending that data over the network to an I/O-centric computing platform and writing the results back to disk. The availability of such large volumes of memory on cloud computing platforms is what makes this possible. However, there are ceilings to this capacity, and you will eventually need to dump what’s in-memory to disk. More important, memory is more finite than disk, so you need to be more efficient with the memory you have available than if you could simply use a disk-centric style of programming.

Parallelization, distribution and multithreading

As mentioned above, being able to distribute work in parallel to machines that have multiple cores and multiple threads is an enormous luxury compared to days of old, when, if it didn’t fit on the disk of the local host, you were in trouble. However, your workload needs to be amenable to either splitting tasks into asynchronous pieces, or the internal tasks of your work needs to be parallelizable.

Fortunately, our use case for a stochastic simulation of the cash flows of an insurance portfolio falls into the category called “embarrassingly parallel”, because every insurance contract is mathematically independent of every other contract. Furthermore, every Monte Carlo simulation iteration for each contract is functionally independent of every other iteration.

We can leverage that directly in the design of our workload, using built-in functionality like the CAS IML map-reduce function, which splits the work across workers and threads on workers. However, because of the cap on available memory to each worker and its threads, some problems may need to be manually subdivided further. For this reason, for our client’s portfolios, we developed a “chunking” mechanism that split groups of contracts into tranches, and groups of iterations into what we called “SimBlocks” (or blocks of simulation iterations).

For instance, if we have 10,000 buyers, we can break the computational design into 10 tranches of 1,000 buyers each, and by doing so, fit the problem into the available compute resources. We also break iterations into SimBlocks because the output of each iteration is independent of any other iteration for that buyer. Consequently, if we specify 100,000 iterations, we can break them into 200 SimBlocks of 500 iterations each.

Writing persistent results

As mentioned above, SAS Viya offers a CAS in-memory SQL engine called FedSQL, which optimizes the aggregation of tranches and SimBlocks. We may not need to write the lowest-level grain of data to disk for subsequent analysis and optimization of the portfolio’s performance. We might instead be able to aggregate the results of a block of simulation iterations for an entire tranche, such as, if our analytic requirements are based on the outcomes of sums and counts. However, quantile calculations at the iteration-contract level will not be satisfied by a pre-aggregation approach. Hence, care needs to be taken in how quantiles get calculated.

At each stage of intermediate aggregation, we write those intermediate result sets to disk (either to a database table, or to Azure blob storage in parquet or orc format), and then we clear the the in-memory cache for that SimBlock and tranche, to make room for the next SimBlock + tranche combination.

I’ve run across examples of asymptotic quantile calculations that can be calculated in blocks and updated similar to the way that a Bayesian prior gets updated by an observation to produce the posterior probability. However, we haven’t used that approach yet in this engagement.

Conclusion

This has been a rewarding and fun engagement, since it’s challenged us on several analytical and technical fronts, and we had the opportunity to integrate several techniques and strategies, including analytics architecture, design patterns, and actuarial science.